The Architecture-First Approach

How to build production systems with AI at 10x velocity—while maintaining quality

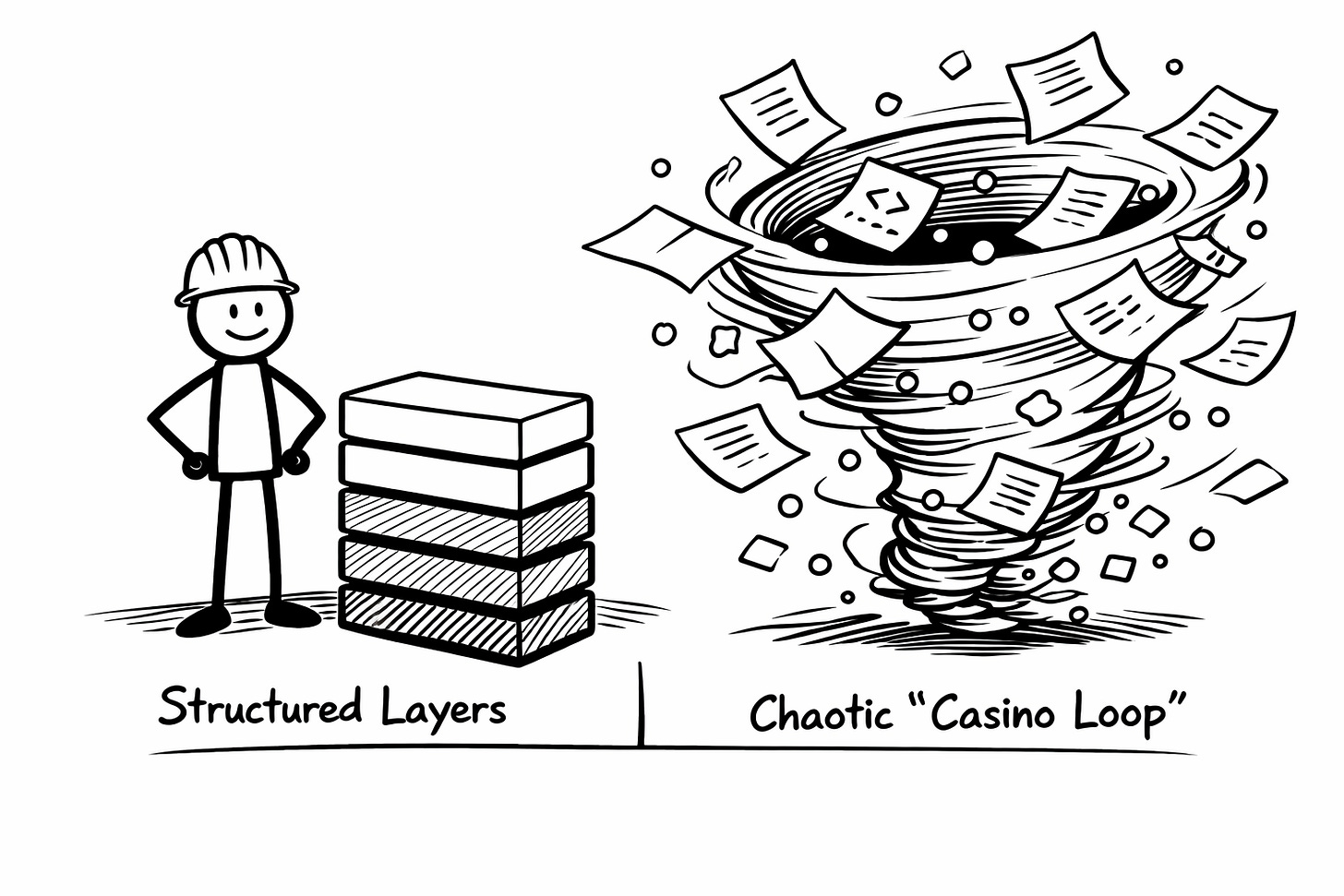

Most engineers using AI tools fall into one of two traps: the endless iteration “casino loop” or treating AI like a junior developer who needs constant supervision. The solution isn’t better AI. It’s better methodology: define your architecture first, decompose it into small work items, then let AI implement each piece while you maintain the system vision.


I recently built an MCP server and agent (around 5,000 lines of production-quality Go code, complete with tests) in about 5 hours. Not a prototype. Not a proof-of-concept that would need to be rewritten. Production code.

This wasn’t AI magic. This was engineering discipline, accelerated.

For 20 years, I’ve built production systems: telecommunications infrastructure, ML platforms, distributed networks. The process has always been the same: envision the system, design the architecture, decompose the complexity, implement the pieces, validate the results. The only difference now is that Claude writes the code instead of me.

This represents something I haven’t seen many engineers articulate yet: a third way between “AI will write everything magically” and “AI coding tools are just glorified autocomplete.” It’s about experienced engineers using AI as a force multiplier—maintaining judgment and process while scaling execution velocity by an order of magnitude.

The Problem with Current Approaches

Most developers I observe working with AI coding tools fall into one of two traps.

The first trap is the “casino loop.” They iterate endlessly with the AI, making small tweaks, running the code, tweaking again. It feels productive—you’re generating code, the screen is filling up—but you’re not building a system. You’re spinning in place. After hours of this, you’re exhausted, the codebase is a mess of conflicting patterns, and you have no clear path forward.

The numbers bear this out. While 90% of teams now use AI tools (up from just 61% a year ago), 75% of engineers report their organizations see no measurable performance gains [1]. More striking: a randomized controlled trial found that allowing AI assistance actually increased completion time by 19% among experienced developers [2]. This isn’t a failure of the technology. It’s a failure of methodology.

The second trap is treating AI like a junior developer who needs constant supervision. You write detailed pseudocode, review every line it generates, and spend more time explaining than you would have spent just writing the code yourself. The AI becomes overhead, not leverage.

Both approaches miss what AI coding tools actually enable: the ability to maintain your senior-level architecture and design decisions while delegating the mechanical work of implementation.

What Changed My Approach

I didn’t start with a methodology. I started with frustration.

Early on, I’d ask Claude to build something, it would generate code, I’d find problems, ask for fixes, find more problems. The context window would fill up with iterations. I’d lose track of what actually worked. Sound familiar?

One day, after yet another exhausting session, I stepped back. What was I doing differently when things went well versus when they didn’t?

The pattern became clear: When I succeeded, I’d already done the hard thinking. I knew what I was building. I had the architecture in my head. I was asking Claude to implement specific, well-defined pieces. When I failed, I was asking Claude to figure out what to build while also building it.

This realization changed everything. The AI isn’t a peer who can collaborate on architecture. It’s an implementation machine that works best when you’ve already made the hard decisions.

So I stopped trying to use AI as an on-the-fly thinking partner for design. I used it as an execution partner for implementation. Architecture first, then code generation.

The Layered Generation Method

I found myself following a pattern. Not consciously at first, but after building a few systems this way, the structure became clear.

Layer 1: Vision Before Code

I started with a clear intent: build an MCP server that evaluates mathematical expressions. Not “build a calculator,” which is too vague. I worked with AI to define the system boundaries, the interfaces, and the success criteria in a “vision” document. This took maybe 30 minutes, and it turned out to be the most important 30 minutes of the project.

This is where your experience matters most. You’re not asking AI what to build—you know what to build. You’re preparing to use AI to build it faster.

Layer 2: Architecture Document

Before any code generation, I wrote an “architecture” document. Not a detailed design doc with every class and method, but the structural decisions: the core calculator logic is separate from the MCP protocol layer, both can be tested independently, and the evaluator interface allows swapping between local and remote computation.

This is human work. AI can’t make these decisions because they require understanding trade-offs, anticipating future changes, and making judgment calls about what matters. Robert C. Martin introduced Clean Architecture on this principle: dependencies point inward, outer layers depend on inner layers, never the reverse [3]. Separation of concerns is so foundational that 2 of the 5 SOLID principles derive directly from it [4].

Your job is to make these architectural calls. The AI’s job comes later.

Layer 3: Structured Decomposition

Here’s where the methodology becomes concrete. I broke the architecture into discrete, implementable pieces. Not todos like “implement calculator”—that’s still too big. Instead: “implement tokenizer that handles operators and parentheses,” “implement operator precedence rules,” “implement error handling for invalid expressions.”

I use Beads, Steve Yegge’s git-backed issue tracker, for this. Simple commands: bd create to make an issue, bd list to see all existing issues, bd show <id> to see details of a specific issue, bd ready to find available work. Each piece becomes an issue. Each issue is small enough that the AI can implement it completely in one interaction.

This matters more than you might think. Your working memory can only hold 4-5 items at once [5]. When you try to work on something too complex, you exceed your own cognitive capacity. For my MCP server, one decomposition looked like this:

- Issue 1: Implement tokenizer for mathematical expressions

- Issue 2: Build operator precedence handler

- Issue 3: Create error handling for invalid syntax

- Issue 4: Add MCP protocol wrapper

Each was small enough that Claude could generate complete, working code in one shot. But together, they built toward the architecture I’d designed. This project became my way of learning Beads—the issue tracker enforcing the discipline that made the speed possible.
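To make the idea of "available work" concrete, here is a rough Go sketch of the four issues as data, with a function that lists the issues whose prerequisites are finished. It is a hypothetical in-memory model, not how Beads stores or computes anything, and the dependency edges between the issues are my assumption for the example.

```go
package main

import "fmt"

// Issue is a hypothetical in-memory model of a work item. It is not the
// Beads data format, only an illustration of the idea.
type Issue struct {
	ID        int
	Title     string
	DependsOn []int
	Done      bool
}

// Ready returns the open issues whose dependencies are all done,
// in the order they appear in the input.
func Ready(issues []Issue) []Issue {
	done := make(map[int]bool, len(issues))
	for _, is := range issues {
		done[is.ID] = is.Done
	}
	var ready []Issue
	for _, is := range issues {
		if is.Done {
			continue
		}
		blocked := false
		for _, dep := range is.DependsOn {
			if !done[dep] {
				blocked = true
				break
			}
		}
		if !blocked {
			ready = append(ready, is)
		}
	}
	return ready
}

// Plan returns the four-issue decomposition from the text.
// The dependency edges are assumed for illustration.
func Plan() []Issue {
	return []Issue{
		{ID: 1, Title: "Implement tokenizer for mathematical expressions"},
		{ID: 2, Title: "Build operator precedence handler", DependsOn: []int{1}},
		{ID: 3, Title: "Create error handling for invalid syntax", DependsOn: []int{1}},
		{ID: 4, Title: "Add MCP protocol wrapper", DependsOn: []int{2, 3}},
	}
}

func main() {
	issues := Plan()
	issues[0].Done = true // the tokenizer is finished
	for _, is := range Ready(issues) {
		fmt.Printf("ready: #%d %s\n", is.ID, is.Title)
	}
}
```

With the tokenizer marked done, issues 2 and 3 become available while issue 4 stays blocked, which is the kind of ordering an issue tracker keeps for you.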

Research shows developers spend 10 times more time reading and understanding code than writing it, and 76% of organizations admit their software architecture’s cognitive burden creates developer stress [6]. Decomposition isn’t just about AI efficiency. It’s about keeping yourself oriented as the system grows.

Layer 4: AI-Driven Implementation

Now the AI does what it’s genuinely excellent at: taking a well-defined piece and generating clean, working code. Give Claude a clear specification, such as “implement a tokenizer that converts a string into a sequence of tokens representing numbers, operators, and parentheses,” and it will generate solid code.
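For a sense of scale, here is roughly what a piece of that size can look like. This is my own minimal sketch of such a tokenizer, not the code Claude generated for the project; the type names, the supported operators (+, -, *, /), and the error format are all assumptions.

```go
package main

import (
	"fmt"
	"strings"
	"unicode"
)

// TokenKind classifies a token. The kinds and names are assumptions
// chosen for this sketch.
type TokenKind int

const (
	Number TokenKind = iota
	Operator
	LParen
	RParen
)

// Token is one lexical unit of an expression.
type Token struct {
	Kind TokenKind
	Text string
}

// isNumberRune reports whether r can be part of a numeric literal.
func isNumberRune(r rune) bool {
	return (r >= '0' && r <= '9') || r == '.'
}

// Tokenize converts an expression into numbers, operators, and
// parentheses. It does not check that a number is well formed
// (for example "1.2.3"); that is left to a later stage.
func Tokenize(input string) ([]Token, error) {
	var tokens []Token
	runes := []rune(input)
	for i := 0; i < len(runes); {
		r := runes[i]
		switch {
		case unicode.IsSpace(r):
			i++
		case isNumberRune(r):
			start := i
			for i < len(runes) && isNumberRune(runes[i]) {
				i++
			}
			tokens = append(tokens, Token{Number, string(runes[start:i])})
		case strings.ContainsRune("+-*/", r):
			tokens = append(tokens, Token{Operator, string(r)})
			i++
		case r == '(':
			tokens = append(tokens, Token{LParen, "("})
			i++
		case r == ')':
			tokens = append(tokens, Token{RParen, ")"})
			i++
		default:
			return nil, fmt.Errorf("unexpected character %q at position %d", r, i)
		}
	}
	return tokens, nil
}

func main() {
	tokens, err := Tokenize("3 + 4 * (2 - 1)")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, t := range tokens {
		fmt.Printf("%d %q\n", t.Kind, t.Text)
	}
}
```

The point is less the code itself than its size: one function, one clear contract, and nothing that requires knowledge of the rest of the system.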

Behind the scenes, Claude grabs the existing codebase, reads your vision and architecture documents, and uses the issue description to build the context it needs. You’re not writing prompts explaining your entire system every time. The structure you created—vision, architecture, decomposed issues—becomes the context automatically.

The difference from the casino loop is that each piece builds on stable foundations. You’re not asking the AI to figure out how pieces connect. You’ve already decided that. You’re asking it to implement one piece at a time.

Layer 5: Test, Validate, Next Layer

After each implementation, I run the tests. If something doesn’t work, I either refine the issue specification (my fault) or ask the AI to fix the specific problem (its fault). Then I move to the next issue.

This creates a virtuous cycle. Each completed issue becomes context for the next one. The codebase grows in a controlled, layered fashion. There’s no moment where I’ve lost track of what exists or how it fits together.
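As an illustration of validating one piece before moving on, here is a table-driven check in Go for a small slice of the "invalid syntax" issue. It is a sketch under assumptions: CheckParens, ErrUnbalanced, and the case table are hypothetical names, and in a real project the table would live in a _test.go file and run under the standard testing package rather than from main.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrUnbalanced is a hypothetical sentinel error for this sketch.
var ErrUnbalanced = errors.New("unbalanced parentheses")

// CheckParens reports ErrUnbalanced if parentheses in expr do not match.
func CheckParens(expr string) error {
	depth := 0
	for _, r := range expr {
		switch r {
		case '(':
			depth++
		case ')':
			depth--
			if depth < 0 {
				return ErrUnbalanced // closing before any opening
			}
		}
	}
	if depth != 0 {
		return ErrUnbalanced // something was left open
	}
	return nil
}

// testCase pairs an input with the behavior we expect from it.
type testCase struct {
	name    string
	expr    string
	wantErr bool
}

// Cases lists the behaviors to validate, not just inputs that compile.
func Cases() []testCase {
	return []testCase{
		{name: "no parentheses", expr: "1 + 2", wantErr: false},
		{name: "balanced", expr: "(1 + 2) * (3 - 4)", wantErr: false},
		{name: "nested", expr: "((1))", wantErr: false},
		{name: "missing close", expr: "(1 + 2", wantErr: true},
		{name: "missing open", expr: "1 + 2)", wantErr: true},
		{name: "wrong order", expr: ")(", wantErr: true},
	}
}

// RunCases returns the names of the cases whose outcome was unexpected.
func RunCases(cases []testCase) []string {
	var failed []string
	for _, tc := range cases {
		err := CheckParens(tc.expr)
		if (err != nil) != tc.wantErr {
			failed = append(failed, tc.name)
		}
	}
	return failed
}

func main() {
	failed := RunCases(Cases())
	if len(failed) == 0 {
		fmt.Println("all cases passed")
		return
	}
	fmt.Println("failed cases:", failed)
}
```

A failing case name tells you immediately whether to refine the issue specification or ask for a targeted fix, which keeps each iteration scoped to one piece.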

Layer 6: Maintain the Architecture

As the system grows, I occasionally pause to ensure the implementation still matches the architecture. If I notice drift, I either update the architecture (the implementation revealed something) or write new issues to bring the code back in line (the implementation strayed).

This is another place where human judgment is essential. AI will happily generate code that works locally but violates your architectural principles. You need to catch that.
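One kind of drift can be caught mechanically. The sketch below uses Go's standard go/parser package to flag a core file that imports the protocol layer, which would reverse the intended dependency direction. It is an illustration, not part of the project described here: the module path example.com/calcserver/mcp and the sample source are invented, and a real check would walk the files on disk.

```go
package main

import (
	"fmt"
	"go/parser"
	"go/token"
	"strconv"
	"strings"
)

// ForbiddenImports parses Go source and returns every import whose path
// starts with one of the forbidden prefixes.
func ForbiddenImports(src string, forbidden []string) ([]string, error) {
	fset := token.NewFileSet()
	// ImportsOnly stops parsing after the import declarations.
	file, err := parser.ParseFile(fset, "core.go", src, parser.ImportsOnly)
	if err != nil {
		return nil, err
	}
	var bad []string
	for _, spec := range file.Imports {
		// Path.Value is the quoted literal, so unquote it first.
		path, err := strconv.Unquote(spec.Path.Value)
		if err != nil {
			return nil, err
		}
		for _, prefix := range forbidden {
			if strings.HasPrefix(path, prefix) {
				bad = append(bad, path)
				break
			}
		}
	}
	return bad, nil
}

// driftedCore is an invented core-layer file that wrongly reaches
// outward into the protocol layer. The module path is hypothetical.
const driftedCore = `package calc

import (
	"strconv"

	"example.com/calcserver/mcp"
)
`

func main() {
	bad, err := ForbiddenImports(driftedCore, []string{"example.com/calcserver/mcp"})
	if err != nil {
		fmt.Println("parse error:", err)
		return
	}
	for _, path := range bad {
		fmt.Println("core layer must not import:", path)
	}
}
```

A check like this only covers import direction. Whether a piece of generated code respects the intent of the architecture still takes a human read.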

Why This Works

It took me a while to understand why this felt different from both traditional development and the casino loop.

I think that the layered approach worked for me because it separates concerns properly:

Human responsibilities:

- Architectural decisions

- Problem decomposition

- Quality standards

- Integration points

AI responsibilities:

- Code generation

- Boilerplate

- Test implementation

- Documentation

The human maintains the mental model of the entire system. The AI implements pieces of it, but never needs to understand the whole. This is actually a strength—it means the AI can focus on making each piece excellent without getting distracted by the larger context.

Managing Cognitive Load

One insight that surprised me was that decomposing work into issues doesn’t just help the AI generate better code. It helps me maintain focus.

When you’re building a complex system, the hardest part isn’t writing any individual piece—it’s keeping track of what’s done, what’s next, and how everything connects. You know, “the flow”. The casino loop happens when you lose that mental model and start reacting to whatever problem appears next.

By externalizing the work plan into concrete issues, I never lost track of where I was. I always knew: “I’m implementing the evaluator interface. After this, I’ll add error handling for edge cases. Then I’ll build the MCP server layer.”

This is the opposite of the chaos that many developers experience with AI tools. It’s more structured than traditional development, not less.

The Production Quality Question

You might be wondering about quality. I was too, initially.

The concern is legitimate. Research shows 40% of organizations lose more than $1 million annually due to poor software quality [7]. Test coverage, defect density, and mean time to detect are not nice-to-haves—they’re what separate production systems from prototypes [8].

In the case of my MCP server, the tests pass, the code is clean, and the architecture is sound. I can hand this codebase to another engineer and they’ll understand it. That’s the standard.

This didn’t happen automatically. It happened because I:

1. Defined quality standards before generation

2. Verified each piece before moving on

3. Wrote tests that validated behavior, not just syntax

4. Maintained architectural consistency throughout

The AI generated the code, but I ensured it met production standards. That’s a distinction worth preserving.

Who This Works For

This methodology is most effective for engineers who already know how to build production systems. If you understand system design, testing strategies, and software architecture, AI coding tools become force multipliers.

The data supports this. Companies with high AI adoption see an average 113% increase in PRs per engineer and a 24% reduction in cycle time [9]. But here’s what matters: senior and experienced developers gain the most from AI—when they use proper methodology [10]. Without methodology, even experienced developers see slowdowns.

If you’re still building those mental models of how systems fit together, AI might actually slow you down—and that’s not a failure, it’s just where you are. The technology amplifies what you bring to it. Experience in architecture, decomposition, and quality evaluation becomes more valuable, not less.

The Future of Development

I think effective AI-assisted development will look like this: experienced engineers maintain architectural control while AI handles the mechanical work of implementation. This is not AI replacing engineers, but AI making experienced engineers vastly more productive.

The engineers who thrive in this environment will be those who can:

- Design clean architectures quickly

- Decompose problems into implementable pieces

- Evaluate code quality at a glance

- Maintain system coherence across layers

These are timeless engineering skills. AI doesn’t make them less important—it makes them more valuable.

The widening gap we’re seeing between junior and senior engineers isn’t about AI replacing one group. It’s about AI amplifying the value of experience. A senior engineer with AI can do the work of a team. A junior engineer with AI might generate impressive-looking code that doesn’t actually work in production.

What does this mean for you? If you’ve built production systems, if you know how to decompose complexity, if you can evaluate architectural tradeoffs—this is your moment. This methodology is a learnable skill.

And if you haven’t built that foundation yet? Build it now, before AI becomes the only way people code. Learn architecture. Practice decomposition. Understand what makes code production-ready. Those skills will serve you whether you’re working with AI or without it.

What methodology are you using for AI-assisted development? What patterns have you noticed in what works versus what doesn’t? I’m still learning this, still refining the approach. I’d be interested to hear what’s working for you.

REFERENCES

[1] Jellyfish (2025). 2025 AI Metrics in Review: What 12 Months of Data Tell Us About Adoption and Impact. https://jellyfish.co/blog/2025-ai-metrics-in-review/

[2] METR (2025). Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

[3] Martin, R. C. (2012). The Clean Architecture. Clean Coder Blog. https://blog.cleancoder.com/uncle-bob/2012/08/13/the-clean-architecture.html

[4] Medium (2024). Using Clean Architecture to ensure Separation of Concerns. https://medium.com/@dorinbaba/using-clean-architecture-to-ensure-separation-of-concerns-c4a9b7d8f0c1

[5] Cowan, N. (2001). The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and Brain Sciences, 24(1).

[6] Agile Analytics (2025). Reducing Developer Cognitive Load in 2025: Strategies for Faster, Smarter Software Delivery. https://www.agileanalytics.cloud/blog/reducing-cognitive-load-the-missing-key-to-faster-development-cycles

[7] Cortex (2025). 18 Software Quality Metrics to Consider Tracking in 2025. https://www.cortex.io/post/software-quality-metrics

[8] Umano Tech (2025). 7 Software Quality Metrics to Track in 2025. https://blog.umano.tech/7-software-quality-metrics-to-track-in-2025

[9] Index (2026). Top 100 Developer Productivity Statistics with AI Tools 2026. https://www.index.dev/blog/developer-productivity-statistics-with-ai-tools

[10] Enreap (2026). AI-Driven Developer Productivity in 2026: Key Lessons from 2025 for Engineering Leaders. https://enreap.com/ai-driven-developer-productivity-in-2026-key-lessons-from-2025-for-engineering-leaders/